Real-world AI performance often differs substantially from what in-silico validation on retrospective datasets suggests. Models built in one clinical environment often perform differently in others because of differences in patient populations or variations in care delivery. In dynamic clinical environments, performance also drifts over time as patient populations evolve, clinical practices change, or data quality shifts.

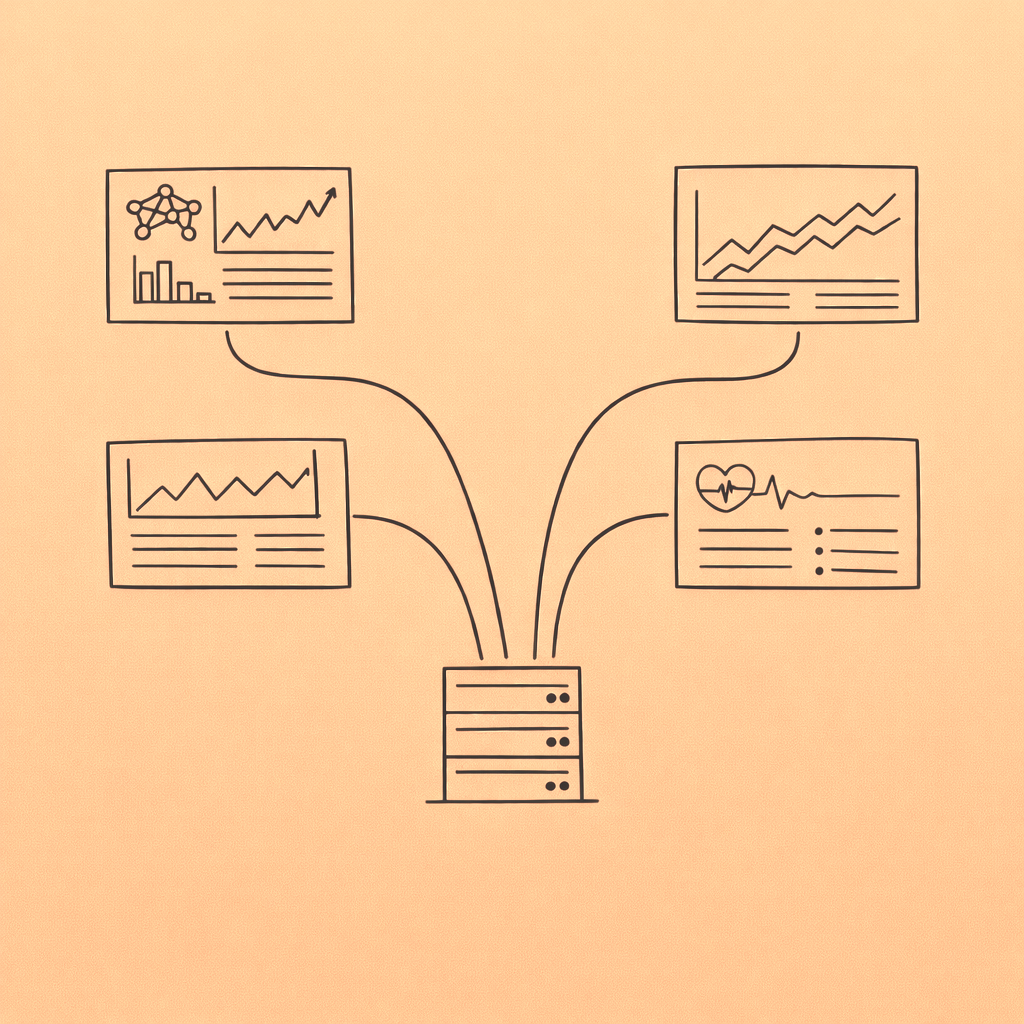

Vega Health continuously monitors AI solutions implemented on the platform, tracking not just aggregate accuracy but also performance across patient subpopulations to detect biases that could cause disparate impacts. We also monitor the data quality of model inputs, because even technically sound models produce inaccurate results when input data is incomplete or implausible.
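To make the two kinds of checks concrete, here is a minimal sketch in Python. It is illustrative only and not a description of the Vega Health platform's implementation: the function names, the record fields (`group`, `prediction`, `label`), the plausibility ranges, and the 0.10 accuracy-gap threshold are all assumptions chosen for the example.

```python
from collections import defaultdict

# Hypothetical plausibility ranges, for illustration only.
PLAUSIBLE_RANGES = {"age": (0, 120), "heart_rate": (20, 250)}


def subgroup_accuracy(records, group_key):
    """Return {group: accuracy} for records carrying 'prediction' and 'label'."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for record in records:
        group = record[group_key]
        total[group] += 1
        correct[group] += int(record["prediction"] == record["label"])
    return {group: correct[group] / total[group] for group in total}


def flag_gaps(per_group, overall, max_gap=0.10):
    """Return groups whose accuracy trails the overall accuracy by more than max_gap."""
    return sorted(g for g, acc in per_group.items() if overall - acc > max_gap)


def input_issues(record, ranges=PLAUSIBLE_RANGES):
    """List the missing or implausible input fields of a single record."""
    issues = []
    for field, (low, high) in ranges.items():
        value = record.get(field)
        if value is None:
            issues.append(f"{field}: missing")
        elif not low <= value <= high:
            issues.append(f"{field}: implausible ({value})")
    return issues


if __name__ == "__main__":
    # Toy records, invented for the example.
    records = [
        {"group": "A", "prediction": 1, "label": 1, "age": 40, "heart_rate": 70},
        {"group": "A", "prediction": 0, "label": 0, "age": 55, "heart_rate": 80},
        {"group": "B", "prediction": 1, "label": 0, "age": 200, "heart_rate": None},
        {"group": "B", "prediction": 1, "label": 1, "age": 30, "heart_rate": 65},
    ]
    overall = sum(r["prediction"] == r["label"] for r in records) / len(records)
    per_group = subgroup_accuracy(records, "group")
    print("Underperforming groups:", flag_gaps(per_group, overall))
    for i, record in enumerate(records):
        for issue in input_issues(record):
            print(f"record {i}: {issue}")
```

The aggregate accuracy in this toy data looks acceptable while one subgroup lags well behind it, which is the pattern that aggregate-only monitoring would miss. A production system would likely use clinically appropriate metrics (for example sensitivity or calibration) and statistically grounded thresholds rather than a fixed gap.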